A long time ago I was given the chance to choose which library I would use for my projects in a 3D graphics class. I could use either PEX, the PHIGS extensions for the ubiquitous X Window System, or OpenGL, some upstart library from who knows where. I said this was a long time ago. I chose PEX because of its connection to the X Window powerhouse. We know how that rivalry turned out.

A few proposals for handling concurrency and parallelism have made it into the standardization discussion. Two in particular have caught my attention. One calls for the inclusion of OpenMP extensions in the language. The other proposes parallel versions of the functions in `<algorithm>` that can be targeted at a specific type of parallel architecture. These two appear to have the most weight behind them, with none other than Oracle and IBM championing OpenMP, and Microsoft and NVidia standing behind the parallel algorithms.

Are those of us who know concurrency is the future (and aren’t convinced that an investment in the future of C++ will make us the new century’s COBOL programmers before then) presented with a similar pickle now? Could both make it into the standard, or should we pick our winner and invest in the preparation needed to take advantage of it? Would you even trust me if I told you which one I thought would win, given my past PEX decision?

So What Are the Proposals?

The OpenMP proposal (N3530) seeks to turn the #pragma directives used in current incarnations of OpenMP into language keywords. An example is using parallelfor in place of the familiar for loop. On the pro side, according to the proposal itself, compilers already have OpenMP support, so it would be relatively easy for the compiler vendors to implement. One con is that I get the feeling the standards committee prefers library solutions over adding keywords. Herb Sutter has described C++14 as an incremental update to C++11, saying “the delta from 11 is pretty small but with a number of convenient fit-and-finish tidy-ups…”, so maybe that indicates sweeping changes like this won’t get far in C++14.
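For reference, here is roughly what the pragma form looks like today. This is only a sketch of existing OpenMP usage, not of the keyword syntax N3530 proposes, and `sum_of_squares` is a name I made up for illustration.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper for illustration; it does not come from N3530.
// The pragma asks an OpenMP-enabled compiler to split the loop iterations
// across threads and to combine each thread's partial `total` with +.
double sum_of_squares(const std::vector<double>& values) {
    double total = 0.0;
    const long n = static_cast<long>(values.size());

    #pragma omp parallel for reduction(+ : total)
    for (long i = 0; i < n; ++i) {
        const double x = values[static_cast<std::size_t>(i)];
        total += x * x;
    }
    return total;
}
```

If OpenMP is not switched on (for example, no `-fopenmp` with GCC or Clang), the pragma is ignored and the loop simply runs sequentially with the same result. That "annotation on top of an ordinary loop" quality is what the proposal would trade for a real keyword.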

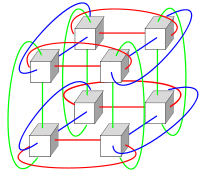

The parallel algorithms proposal (N3554) would add a parallel execution policy to the algorithms in `<algorithm>`. There would be a default sequential policy, a parallel policy and a vectorized policy. This would give us the power to declare, for example, that our std::sort should run in parallel on multiple cores, on the GPU, or using the CPU’s SIMD facilities. This would provide a lot of bang for the buck. Microsoft with their C++ AMP and PPL, and NVidia with OpenCL in hand, would be at the front of the pack of implementors. Other compiler vendors might need to do some catching up.
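To make the policy idea concrete, here is a minimal sketch of how an extra first argument can select the implementation. Everything in the `sketch` namespace (the policy types and the `sort` overloads) is hypothetical and mine, not the interface from the proposal, and the two-way split with `std::async` is just the simplest parallel strategy I could fit in a few lines.

```cpp
#include <algorithm>
#include <future>
#include <iterator>
#include <vector>

namespace sketch {  // hypothetical names, not the proposal's actual API

// Empty tag types: the caller picks an overload by passing one of these.
struct sequential_policy {};
struct parallel_policy {};

// Sequential policy: forward straight to the ordinary algorithm.
template <typename RandomIt>
void sort(sequential_policy, RandomIt first, RandomIt last) {
    std::sort(first, last);
}

// Parallel policy: sort the two halves concurrently, then merge them.
template <typename RandomIt>
void sort(parallel_policy, RandomIt first, RandomIt last) {
    const auto n = std::distance(first, last);
    if (n < 2) {
        return;  // nothing to split
    }
    RandomIt middle = first + n / 2;

    // The left half is sorted on another thread...
    auto left = std::async(std::launch::async,
                           [first, middle] { std::sort(first, middle); });
    // ...while the calling thread sorts the right half.
    std::sort(middle, last);
    left.get();  // wait for the left half before merging

    std::inplace_merge(first, middle, last);
}

}  // namespace sketch
```

A call then looks like `sketch::sort(sketch::parallel_policy{}, v.begin(), v.end());`, and swapping in `sketch::sequential_policy{}` gives the plain behavior back. The appeal is that the call site barely changes; a real implementation would of course have to decide how to split the work and when parallelism is worth the overhead.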

Okay, so I misled you, and there isn’t a great dilemma over which technology to bet on at this time. The two proposals are not mutually exclusive. You can imagine that the parallel algorithms could be implemented with the help of the OpenMP extensions. Which of these, if any, will make it into the standard, I have no idea. What is important is that there could be some powerful, higher-level concurrency support in the language sooner or later. I’d like to have the ability to easily set loose some cores with some STL algorithms, so if given a choice I’d prefer the parallel algorithms. Some OpenMP-like support might naturally fall out of that effort anyway.

Meeting C++ has a great rundown of the papers submitted for review if you care to speculate more on what your favorite down-to-the-metal language might look like in a couple of years.