Making Spectrograms in JUCE

Image: Spectrogram of swelling trumpet sound

Art+Logic’s Incubator project has made a lot of progress. In a previous post, I mentioned that Dr. Scott Hawley’s technique to classify audio involved converting audio to an image and using a Convolutional Neural...

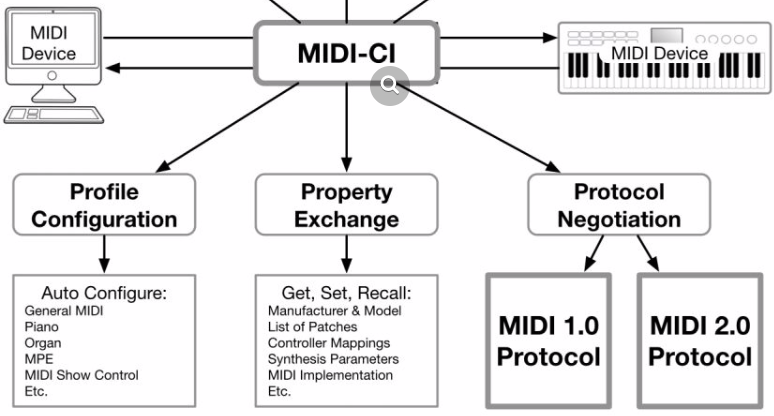

Art+Logic Talks MIDI 2.0

For the past year or so, I’ve been one of a group of developers within the Protocol Working Group of the MIDI Manufacturers Association, creating prototype tools and applications that implement the upcoming MIDI 2.0 specification. The specification has worked its way through many drafts and is now ready to be voted on as an official standard. Read on for details on some upcoming talks I’ll be presenting on it.

Unlocking the Web Audio API

“It’s going to be a music machine – like, full keyboard and everything – but each of the keys is going to be mapped to – wait for it – cat sounds! We’ll call it the ‘Meowsic Machine’! Oh, and we need it to be accessible to everyone via the Web. Which is easy, right?”

You are reminded that the universe can be a cruel place.

It’s now your job to make this happen.